Alternatives to OpenMark AI

OpenMark AI instantly benchmarks over 100 AI models on your exact task to find the best one for cost, speed, and quality.

Explore 20 alternatives to OpenMark AI. Compare features and pricing to find the best fit for your needs.

LoadTester

LoadTester unlocks your team's potential by transforming HTTP and API load testing into a game-changing, zero-infrastructure experience.

ProcessSpy

ProcessSpy unlocks advanced macOS process monitoring with powerful filtering, real-time insights, and native performance.

Claw Messenger

Claw Messenger empowers your AI agent with its own iMessage number for seamless, instant communication across any platform.

Datamata Studios

Datamata Studios empowers developers and data professionals with essential tools and insights to elevate their skills and automate their workflows.

Requestly

Requestly is a fast, git-based API client that simplifies collaboration and testing without the hassle of logins or bloat.

OGimagen

OGImagen transforms your content into perfect, AI-generated social images and ready-to-paste meta tags in seconds.

qtrl.ai

qtrl.ai scales QA with autonomous AI agents while ensuring full team control and governance.

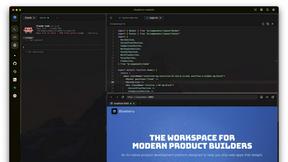

Blueberry

Blueberry unifies your code editor, terminal, and browser into one powerful workspace for seamless web app development.

Lovalingo

Lovalingo enables effortless, zero-flash translation of React apps into 20+ languages in just 60 seconds.

HookMesh

Elevate your SaaS with HookMesh for seamless, reliable webhook delivery and effortless customer management.

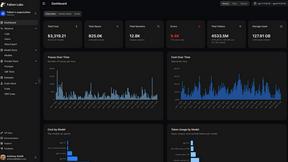

Fallom

Fallom unlocks AI's full potential with real-time observability for every LLM call and agent.

diffray

Diffray transforms code review with multi-agent AI, expertly identifying real bugs while minimizing false positives.

CloudBurn

CloudBurn prevents costly AWS mistakes by showing infrastructure cost estimates in every pull request.

Skene

Skene empowers your codebase to drive growth effortlessly with AI, transforming it into a product-led growth engine.

Agenta

Agenta transforms LLM development by centralizing workflows for collaboration, evaluation, and reliable AI app creation.

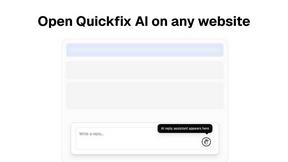

Quickfix AI

Quickfix AI instantly diagnoses system issues and provides clear, actionable insights to enhance performance.

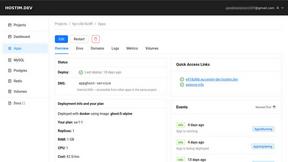

Hostim.dev

Hostim.dev transforms Docker deployment with built-in databases and predictable EU-hosted pricing.

Prefactor

Prefactor empowers you to govern AI agents at scale with real-time visibility, compliance, and identity-first control.

About OpenMark AI Alternatives

OpenMark AI is a transformative developer tool for task-level LLM benchmarking. It empowers teams to make data-driven decisions by running real prompts against a vast catalog of models, comparing critical metrics like cost, latency, quality, and output stability in a single, unified session.

Users often explore alternatives for various reasons, such as budget constraints, the need for different feature sets like on-premise deployment, or a preference for integrating benchmarking directly into their existing development workflow. The landscape of AI evaluation tools is rapidly evolving, offering different approaches to a common challenge.

When evaluating alternatives, focus on what truly matters for your project. Key considerations include whether the tool provides real, non-cached API results, the breadth and depth of the model catalog, the granularity of performance and stability metrics, and how the platform aligns with your team's workflow and security requirements.

FAQs about OpenMark AI Alternatives

What is OpenMark AI?

OpenMark AI is a web application for task-level LLM benchmarking that runs your prompts against 100+ models to compare cost, speed, quality, and stability.

Who is OpenMark AI for?

It is built for developers and product teams who need to choose or validate a model before shipping an AI feature, focusing on cost efficiency and real-world performance.

Is OpenMark AI free?

OpenMark AI offers both free and paid plans, with details available in the application's billing section.

Why choose OpenMark AI?

It provides side-by-side results from real API calls and shows the variance across runs, so you see how consistent each model is rather than a single lucky output. That makes pre-deployment decisions more confident.