Hostim.dev vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

Hostim.dev

Hostim.dev simplifies Docker deployment with built-in databases, EU hosting, and predictable pricing.

Last updated: March 1, 2026

OpenMark AI

OpenMark AI benchmarks more than 100 AI models on your exact task to find the best one for cost, speed, and quality.

Last updated: March 26, 2026

Visual Comparison

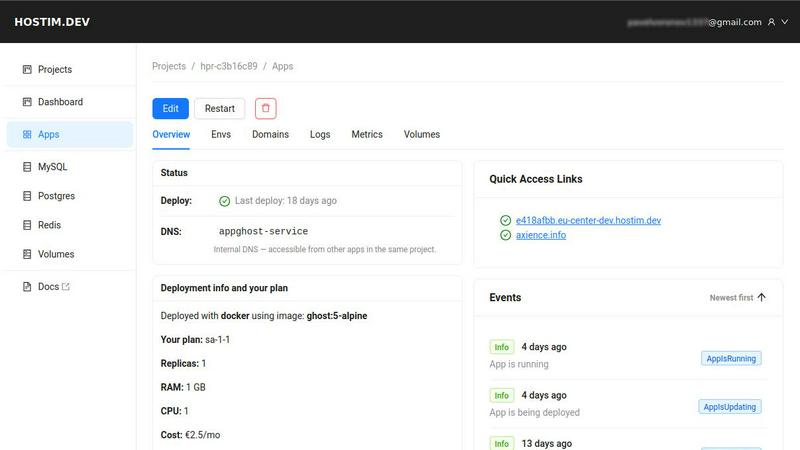

Hostim.dev

OpenMark AI

Feature Comparison

Hostim.dev

Effortless Deployment from Any Source

Deploy directly from your existing workflow. Hostim.dev accepts your application as a Docker image, a Git repository (built automatically), or a Docker Compose file that defines a multi-service stack. This lets you deploy applications that include databases and caches in minutes, without writing Kubernetes YAML or setting up a CI/CD pipeline from scratch, so the path from development to a live production environment stays short.

Built-in Managed Services & Persistent Storage

Core services are provisioned on demand and fully managed. With one click, you can spin up production-ready MySQL, PostgreSQL, and Redis instances that are automatically wired to your application through secure environment variables. Together with provisioned persistent volumes for your data, this removes the slow, error-prone work of manually setting up, securing, and connecting databases, so your application stack is complete from the first deploy.
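
From the application's side, pre-wired services typically arrive as environment variables. The variable name and format below (`DATABASE_URL` holding a PostgreSQL-style connection URL) are hypothetical placeholders, not names Hostim.dev is documented here to inject. This is a minimal standard-library sketch of reading such a variable, under that assumption.

```python
import os
from typing import Mapping, Optional
from urllib.parse import urlparse


def database_settings(env: Optional[Mapping[str, str]] = None) -> dict:
    """Parse connection settings from a URL-style environment variable.

    DATABASE_URL is a hypothetical variable name used for illustration;
    check which variables your platform actually injects.
    """
    env = os.environ if env is None else env
    url = env.get("DATABASE_URL")
    if not url:
        raise RuntimeError("DATABASE_URL is not set")
    parts = urlparse(url)
    return {
        "scheme": parts.scheme,
        "host": parts.hostname,
        "port": parts.port,
        "user": parts.username,
        "password": parts.password,
        "database": parts.path.lstrip("/"),
    }


if __name__ == "__main__":
    # Example value only; in a deployed app the platform would set the variable.
    demo = {"DATABASE_URL": "postgresql://app:secret@db:5432/appdb"}
    print(database_settings(demo))
```

Passing an explicit mapping keeps the function easy to test; in production you would call `database_settings()` with no arguments so it reads `os.environ`.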

Secure, Isolated Projects by Default

Hostim.dev follows a security-first design. Every project runs in its own isolated Kubernetes namespace, which separates resources, networking, and data between your projects or clients. TLS/SSL certificates are provisioned automatically for every service, so HTTPS is enabled from the start. Live logs, metrics, and monitoring are available out of the box, with no extra configuration.

Transparent EU Bare-Metal Hosting

Hostim.dev avoids opaque cloud pricing and data residency concerns. It runs on high-performance bare-metal servers in Germany, so your data stays in the EU on GDPR-compliant infrastructure. Running on dedicated hardware rather than virtualized cloud instances is meant to deliver more consistent performance. Billing is per project and hourly, with a cost-tracking dashboard, and prices start at €2.5 per month.

OpenMark AI

Plain Language Task Description

Turn your workflow requirements into benchmarks without writing code. Describe the task you want to test in natural language, anything from creative writing and translation to complex data extraction. The editor guides you through defining the task, so anyone on your team can validate it and run a benchmark in minutes.

Multi-Model Benchmarking in One Session

Test the same prompt against more than 100 leading LLMs in a single session. Results appear side by side, so you don't have to switch between provider dashboards and APIs, and you get one unified view for comparing models directly on your specific task.

Real Cost, Latency & Stability Metrics

Move beyond theoretical datasheets. OpenMark AI makes real API calls to each model and reports the actual cost per request and measured latency. Its main differentiator is measuring stability across repeat runs, which shows the variance in outputs. This reveals which models are consistently reliable and which just got lucky once, a critical factor for production applications.
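
The page does not say exactly how OpenMark AI scores outputs or quantifies variance. As a minimal sketch, assuming each repeat run yields a numeric quality score, the population standard deviation of those scores is one simple stability measure: two models can have similar averages while one is far more erratic. The model names and scores are made up.

```python
from statistics import mean, pstdev


def stability_report(scores_by_model: dict) -> dict:
    """Summarise repeat-run quality scores per model.

    scores_by_model maps a model name to the scores it received on the same
    task across repeated runs. A lower standard deviation means more stable.
    """
    return {
        model: {"mean": mean(scores), "stdev": pstdev(scores)}
        for model, scores in scores_by_model.items()
    }


# Hypothetical scores (0-10) from five repeat runs of the same prompt.
runs = {
    "model-a": [8, 8, 9, 8, 8],
    "model-b": [10, 4, 9, 3, 10],
}

for model, stats in stability_report(runs).items():
    print(f"{model}: mean={stats['mean']:.1f} stdev={stats['stdev']:.2f}")
```

Both models average above 7, but `model-b` swings between 3 and 10. That is the "got lucky once" pattern a single run would hide.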

Hosted Platform with Credit System

Skip API key management. The platform runs on a credit system, so you don't need to configure or fund separate accounts with OpenAI, Anthropic, Google, or other providers. Because it is hosted, you can start comparing models immediately, with all billing and infrastructure handled through OpenMark AI.

Use Cases

Hostim.dev

Rapid Prototyping and MVP Launch for Startups

For startups racing to validate an idea, Hostim.dev is a fast launchpad. Developers can take a backend built with Express.js, Django, FastAPI, or Spring Boot, define the stack in a Docker Compose file, and have a live, scalable MVP online in under an hour. With built-in databases and automatic HTTPS, the focus stays on product development and user feedback rather than on configuring servers, databases, or security policies, which shortens the path to market.

Client Project Management for Freelancers & Agencies

Agencies and freelancers can streamline delivery and billing. Each client project can be deployed as a separate, isolated environment on Hostim.dev, keeping clients securely separated. Per-project cost tracking allows precise, transparent billing to clients. Handovers are simpler too, since the entire application stack is managed in one interface, without the server access and maintenance work that client projects usually involve.

Learning and Portfolio Building for Students

Hostim.dev gives students and aspiring developers real-world deployment experience. It provides a low-risk, professional platform for deploying full-stack applications with Docker, real databases, and modern frameworks. The free trial and student credits let learners move beyond localhost and build a portfolio of live, publicly accessible projects that demonstrate practical skills with industry-standard deployment tools and managed services.

Simplified SaaS Application Hosting

SaaS builders can use Hostim.dev to host their applications simply while keeping data in the EU. The platform's EU-based, GDPR-compliant infrastructure suits products serving European customers. CPU and RAM can be scaled directly from the UI with zero downtime, and per-project isolation (a project can represent a tenant or an application module) provides a scalable, secure, and maintainable foundation for growing a SaaS business without a dedicated DevOps team.

OpenMark AI

Pre-Deployment Model Selection

Before integrating an AI feature into your product, determine which model offers the best balance of quality, cost, and speed for your use case. Test candidate models on your actual task prompts to see which one "actually gets it right," so you ship with the most effective and efficient model from day one.

Cost Efficiency Optimization for Scaling

When scaling an AI-powered feature, you need to know what it really costs. Benchmark models to weigh output quality against the actual price per API call. Teams can then optimize for cost efficiency, potentially saving thousands by choosing a model that delivers nearly identical quality at a much lower operating cost.
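
One way to express "nearly identical quality at a lower cost" is a selection rule: find the best mean quality score, keep every model within a chosen tolerance of it, and pick the cheapest of those. This sketch assumes you already have per-model quality scores and per-request costs, for example from a benchmark run. The tolerance, model names, and numbers are hypothetical, and the rule is an illustration, not OpenMark AI's own ranking method.

```python
from dataclasses import dataclass


@dataclass
class Result:
    model: str
    quality: float  # mean quality score on your task, e.g. 0-10
    cost: float     # cost per request in USD


def cheapest_near_best(results: list, tolerance: float) -> Result:
    """Return the cheapest model whose quality is within `tolerance` of the best."""
    best = max(r.quality for r in results)
    candidates = [r for r in results if best - r.quality <= tolerance]
    return min(candidates, key=lambda r: r.cost)


# Hypothetical benchmark results.
results = [
    Result("model-large", 9.1, 0.0120),
    Result("model-medium", 8.9, 0.0030),
    Result("model-small", 7.2, 0.0004),
]

choice = cheapest_near_best(results, tolerance=0.3)
print(choice.model)
```

With a tolerance of 0.3, `model-medium` is selected: it scores within 0.2 of the best model at a quarter of the cost per request. Setting the tolerance to 0 falls back to the highest-quality model regardless of price.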

Validating Output Consistency & Reliability

For applications where consistent outputs are non-negotiable, such as automated data entry, customer support responses, or content moderation, test model stability. OpenMark AI's repeat-run analysis shows variance, helping you identify and avoid highly volatile models so your users get dependable, predictable results.

Prototyping & Research for New AI Workflows

Rapidly prototype new AI capabilities by testing a wide range of models on novel tasks like complex agent routing, specialized research Q&A, or image analysis prompts. This exploratory benchmarking provides immediate, empirical data on what is possible, accelerating the research phase and informing architectural decisions without upfront API commitments.

Overview

About Hostim.dev

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) for deploying and managing containerized applications. It is built to remove much of the complexity of DevOps and cloud infrastructure. You can go from code to production in minutes by deploying directly from a Docker image, a Git repository, or a full Docker Compose file. Hostim.dev automates the rest of the stack, provisioning and wiring together services such as MySQL, PostgreSQL, Redis, and persistent storage. Every project gets automatic HTTPS, runs in an isolated Kubernetes namespace, and is covered by transparent, per-second billing for predictable costs. With its GDPR-compliant infrastructure hosted in Germany, Hostim.dev is aimed at developers, freelancers, startups, and agencies who want a powerful, transparent, and controlled deployment experience without the administrative overhead.

About OpenMark AI

Stop guessing when you choose an AI model. OpenMark AI is a web platform for task-level LLM benchmarking, designed to remove guesswork before you ship. It helps developers and product teams make data-driven decisions by testing AI models against their exact, real-world tasks. Describe what you need in plain language (classification, data extraction, RAG, or agent routing, for example) and run benchmarks across a catalog of 100+ models in a single session. The platform compares key metrics side by side: real API cost per request, latency, scored output quality, and, uniquely, stability across repeat runs. Stability exposes performance variance, so you see consistent reliability rather than a single lucky output. The hosted credit system removes the need to configure separate API keys for OpenAI, Anthropic, Google, and others, while still returning genuine, uncached results from real API calls. It is built for teams that prioritize cost efficiency (the most quality for your budget) over the cheapest token price on a datasheet, so they can make smarter, more confident pre-deployment decisions.

Frequently Asked Questions

Hostim.dev FAQ

What does the free tier include?

Hostim.dev offers a 5-day free trial with no credit card required at signup. During the trial you can create a full project with all platform features, including deployment from Docker, Git, or Compose, built-in services, and automatic HTTPS, so you can fully test your application stack. In addition, some managed services, such as databases, have their own free usage tiers, which makes it possible to run small projects at very low cost.

Can I deploy with just a Docker Compose file?

Yes. Deploying from a Docker Compose file is a core feature of Hostim.dev. Paste your existing docker-compose.yml into the dashboard, and Hostim.dev interprets it, provisions the services it defines (or uses its built-in managed ones), sets up internal networking, and deploys the whole multi-container stack to a live, isolated environment in minutes, with no manual infrastructure work.

Where is my app hosted?

All applications on Hostim.dev run on high-performance bare-metal servers in data centers in Germany. Your application gets the raw power of dedicated hardware, and all data is stored and processed within the European Union, which makes GDPR compliance easier: a significant advantage for businesses serving the EU market.

Do I need to know Kubernetes or DevOps?

Not at all. Hostim.dev is specifically designed to abstract away the complexity of Kubernetes and traditional DevOps. The platform uses Kubernetes under the hood to deliver robustness and isolation, but you never need to interact with it, write YAML, or understand its concepts. You manage your applications through a simple, intuitive UI or CLI, focusing solely on your code while Hostim.dev handles all the underlying infrastructure, orchestration, and operational complexities for you.

OpenMark AI FAQ

How does OpenMark AI calculate costs?

OpenMark AI calculates costs by making real API calls to the model providers during your benchmark. It tracks the exact token usage (input and output) for each model on your specific task and applies the provider's latest public pricing. This gives you the actual, real-world cost per request, not an estimate or marketing number, for accurate financial planning.
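
The cost arithmetic described above is token counts multiplied by per-token prices, with input and output tokens priced separately. A minimal sketch, assuming prices quoted per million tokens (a common convention); the prices in the example are hypothetical, not any provider's actual rates.

```python
def cost_per_request(input_tokens: int, output_tokens: int,
                     input_price_per_m: float, output_price_per_m: float) -> float:
    """Cost of one request in the pricing currency.

    Prices are per one million tokens; input and output are billed separately.
    """
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# Hypothetical example: 1,200 input tokens and 300 output tokens at
# $3 per million input tokens and $15 per million output tokens.
cost = cost_per_request(1_200, 300, 3.0, 15.0)
print(f"${cost:.4f} per request")  # → $0.0081 per request
```

Multiplying by expected request volume gives a rough monthly figure: at one million requests, this example comes to about $8,100.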

What does "stability" or "variance" testing mean?

Stability testing refers to running the same task multiple times (in repeat runs) with the same model and prompt. OpenMark AI measures how much the outputs vary across these runs. Low variance indicates a stable, predictable model, while high variance suggests unreliable or "flaky" performance. This metric is crucial for production applications where consistency is key.

Do I need my own API keys to use OpenMark AI?

No, you do not need to configure or manage any external API keys. OpenMark AI operates on a hosted credit system. You purchase credits through the platform, and it handles all the API calls to providers like OpenAI, Anthropic, and Google on your behalf. This removes setup friction and allows for seamless, multi-provider benchmarking in one place.

What kind of tasks can I benchmark?

You can benchmark virtually any task that can be described in language. Common use cases include text classification, summarization, translation, data extraction from documents, question answering for RAG systems, agentic workflow routing, creative writing, code generation, and image analysis (for vision-capable models). The platform is designed to adapt to your unique requirements.

Alternatives

Hostim.dev Alternatives

Hostim.dev is a bare-metal Platform-as-a-Service (PaaS) for containerized app deployment. It belongs to the category of developer-centric cloud platforms, designed to strip away DevOps complexity and enable fast, secure deployment directly from Docker or Git. Developers explore alternatives for various reasons: needs evolve, and specific geographic hosting requirements, budget constraints, or the need for different integrated services can prompt a search. The choice often balances simplicity against fine-grained control, or predictable pricing against other scaling models. When evaluating other platforms, consider how simple the deployment workflow is, how transparent and well-structured the pricing is, how robust the built-in services such as databases are, and the security and compliance standards of the underlying infrastructure. The goal is a solution that matches your project's technical demands and operational philosophy.

OpenMark AI Alternatives

OpenMark AI is a developer tool for task-level LLM benchmarking. It helps teams make data-driven decisions by running real prompts against a large catalog of models and comparing cost, latency, quality, and output stability in a single session. Users often look for alternatives because of budget constraints, the need for features such as on-premises deployment, or a preference for running benchmarks directly inside their existing development workflow. AI evaluation tools are evolving quickly and take different approaches to the same challenge. When comparing alternatives, focus on what matters for your project: whether the tool returns real, uncached API results, the breadth and depth of its model catalog, the granularity of its performance and stability metrics, and how well it fits your team's workflow and security requirements.