Fallom vs qtrl.ai

Side-by-side comparison to help you choose the right AI tool.

Fallom

Fallom unlocks AI's full potential with real-time observability for every LLM call and agent.

Last updated: February 28, 2026

qtrl.ai

qtrl.ai scales QA with autonomous AI agents while ensuring full team control and governance.

Last updated: March 4, 2026

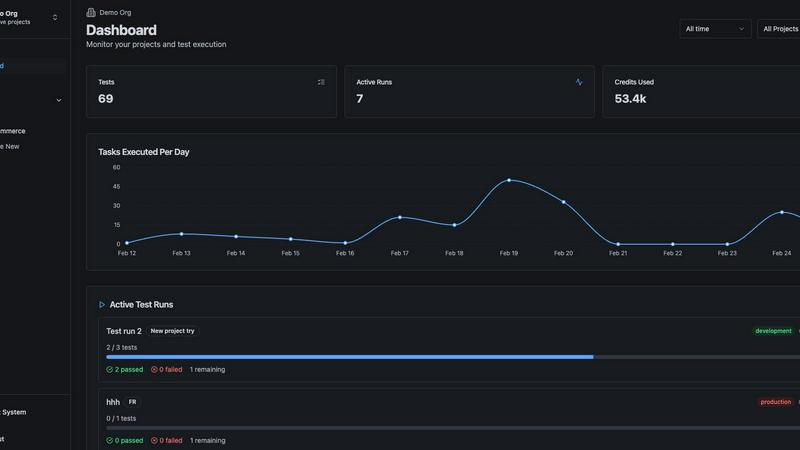

Visual Comparison

Fallom

qtrl.ai

Feature Comparison

Fallom

End-to-End LLM Tracing & Live Dashboard

Gain real-time, granular visibility into every LLM interaction within your applications. Fallom's live dashboard displays a comprehensive trace of each call, showing the exact input prompt, the model used, token counts (in/out), precise cost, and latency—all updating in real time. This allows you to monitor the health and performance of your AI agents live, spot anomalies as they happen, and drill down into any call for immediate debugging, transforming opaque AI operations into a transparent, manageable system.
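
To make the idea of a per-call trace concrete, here is a minimal sketch of capturing the fields described above (prompt, model, token counts, latency) around a single call. It is not Fallom's SDK: `TraceRecord`, `traced_call`, `fake_llm`, and the whitespace-based token count are all hypothetical stand-ins chosen for illustration.

```python
import time
from dataclasses import dataclass


@dataclass
class TraceRecord:
    """One traced LLM call. Field names are hypothetical, not a vendor schema."""
    model: str
    prompt: str
    output: str
    tokens_in: int
    tokens_out: int
    latency_s: float


def count_tokens(text):
    # Assumption: whitespace splitting stands in for a real tokenizer.
    return len(text.split())


def traced_call(model, prompt, llm_fn):
    """Call llm_fn(prompt) and record what a trace row would display."""
    start = time.perf_counter()
    output = llm_fn(prompt)
    latency_s = time.perf_counter() - start
    return TraceRecord(
        model=model,
        prompt=prompt,
        output=output,
        tokens_in=count_tokens(prompt),
        tokens_out=count_tokens(output),
        latency_s=latency_s,
    )


def fake_llm(prompt):
    # Stand-in for a real provider call, so the sketch runs offline.
    return "Paris is the capital of France."


record = traced_call("example-model", "What is the capital of France?", fake_llm)
print(record.model, record.tokens_in, record.tokens_out)
```

A real tracing layer would export records like this to a backend rather than print them; the point is only that each call yields one structured row that a dashboard can display and filter.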

Granular Cost Attribution & Analytics

Take control of your AI spending with Fallom's powerful cost attribution engine. Break down your total LLM expenditure by model, individual user, team, or even specific customer. The platform provides clear visualizations and detailed reports, enabling precise budgeting, internal chargebacks, and identifying cost-optimization opportunities. You can instantly see which models or features are driving your bill, empowering data-driven decisions to improve efficiency without sacrificing performance.
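
As a rough illustration of what cost attribution involves, the sketch below prices each call from its token counts and then groups the totals by an arbitrary dimension such as model, user, or team. The price table, record fields, and function names are assumptions made for this example; they do not reflect real provider pricing or Fallom's data model.

```python
from collections import defaultdict

# Hypothetical USD prices per 1,000 tokens; real prices vary by provider.
PRICES = {
    "model-a": {"in": 0.001, "out": 0.002},
    "model-b": {"in": 0.010, "out": 0.030},
}

# Hypothetical call records, as a tracing layer might store them.
calls = [
    {"model": "model-a", "user": "alice", "team": "support", "tokens_in": 1000, "tokens_out": 500},
    {"model": "model-b", "user": "bob", "team": "research", "tokens_in": 2000, "tokens_out": 1000},
    {"model": "model-a", "user": "alice", "team": "support", "tokens_in": 3000, "tokens_out": 1000},
]


def call_cost(call):
    """Cost of one call: input and output tokens are priced separately."""
    price = PRICES[call["model"]]
    return (call["tokens_in"] / 1000) * price["in"] + (call["tokens_out"] / 1000) * price["out"]


def attribute_costs(calls, key):
    """Sum call costs grouped by one dimension, e.g. 'model', 'user', or 'team'."""
    totals = defaultdict(float)
    for call in calls:
        totals[call[key]] += call_cost(call)
    return dict(totals)


by_model = attribute_costs(calls, "model")
by_team = attribute_costs(calls, "team")
for name, total in sorted(by_model.items()):
    print(f"{name}: ${total:.4f}")
```

Grouping by a different key (`"user"`, `"team"`) reuses the same per-call cost, which is why attribution by customer or feature only requires that the dimension be recorded on each call.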

Enterprise Compliance & Audit Trails

Built for regulated industries, Fallom ensures your AI deployments are audit-ready. It maintains complete, immutable audit trails of every LLM interaction, logging inputs, outputs, model versions, and user context. This is essential for meeting stringent regulatory requirements like the EU AI Act, GDPR, and SOC 2. Features like configurable privacy modes allow you to redact sensitive data while retaining full telemetry, balancing powerful observability with strict data governance and security standards.

Advanced Debugging with Timing Waterfalls & Tool Visibility

Debug complex, multi-step AI agent workflows with confidence. Fallom's timing waterfall visualizations break down the exact sequence and duration of each step in an agent's execution—from LLM calls and tool executions (like database queries or API calls) to final response formatting. Coupled with complete visibility into every tool call's arguments and results, you can pinpoint latency bottlenecks, diagnose logic errors, and optimize the performance of your most sophisticated AI chains.
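
A timing waterfall is, at its core, a list of named spans with start and end times drawn against a shared time axis. The sketch below renders one as text and picks out the slowest step. The span names, timings, and helper functions are hypothetical and only illustrate the concept; they are not Fallom's visualization or data format.

```python
def render_waterfall(spans, width=40):
    """Render (name, start_ms, end_ms) spans as text rows on a shared time axis."""
    t0 = min(start for _, start, _ in spans)
    t1 = max(end for _, _, end in spans)
    total = max(t1 - t0, 1)  # avoid division by zero for zero-length traces
    scale = width / total
    rows = []
    for name, start, end in sorted(spans, key=lambda s: s[1]):
        offset = int((start - t0) * scale)
        length = max(1, int((end - start) * scale))  # always draw at least one cell
        rows.append(f"{name:<12}|{' ' * offset}{'#' * length} {end - start} ms")
    return rows


def slowest_span(spans):
    """Return the name of the span with the longest duration."""
    return max(spans, key=lambda s: s[2] - s[1])[0]


# Hypothetical agent run: plan, query a database, answer, format.
spans = [
    ("llm_plan", 0, 400),
    ("db_query", 400, 1200),
    ("llm_answer", 1200, 1600),
    ("format", 1600, 1650),
]

rows = render_waterfall(spans)
print("\n".join(rows))
print("bottleneck:", slowest_span(spans))
```

In this made-up run the database query dominates the total time, which is the kind of bottleneck a waterfall view is meant to surface at a glance.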

qtrl.ai

Autonomous QA Agents

qtrl's intelligent agents execute high-level instructions on demand or continuously, operating within your strictly defined rules. They perform real browser execution—not mere simulations—across multiple environments at immense scale. This feature provides the power of automation with the crucial oversight that engineering teams demand, ensuring actions are predictable, reviewable, and fully aligned with your testing strategy.

Enterprise-Grade Test Management

This foundational feature provides a centralized, structured hub for all QA activities. It encompasses comprehensive test case libraries, detailed test plans, and organized test runs with full traceability from requirements to execution. Built with compliance and auditability in mind, it supports both manual and automated workflows, giving managers and leads crystal-clear visibility into coverage, status, and potential quality risks.

Progressive Automation

qtrl champions a human-in-the-loop evolution. Teams start by writing plain-English test instructions for the AI to execute. When ready, they can transition to having qtrl automatically generate and run tests based on connected requirements, with every suggestion fully reviewable and refinable. The platform proactively analyzes coverage gaps and proposes new tests, allowing automation to grow intelligently under your complete control.

Adaptive Memory & Multi-Environment Execution

The platform's Adaptive Memory builds a living, evolving knowledge base of your application by learning from every exploration, test run, and identified issue. This powers context-aware, smarter test generation over time. Coupled with robust multi-environment execution capabilities—supporting dev, staging, and prod with per-environment variables and encrypted secrets—teams can ensure consistent, secure testing across the entire development lifecycle.
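
Per-environment variables are commonly modeled as shared defaults plus per-environment overrides, with secret values masked whenever the configuration is displayed. The sketch below shows that general pattern under stated assumptions: the variable names, URLs, and functions are hypothetical, and it does not describe how qtrl stores or encrypts secrets.

```python
# Hypothetical defaults shared by every environment.
DEFAULTS = {"base_url": "http://localhost:3000", "timeout_s": 30, "api_token": "dev-token"}

# Hypothetical per-environment overrides; missing keys fall back to DEFAULTS.
ENV_OVERRIDES = {
    "dev": {},
    "staging": {"base_url": "https://staging.example.test", "api_token": "staging-token"},
    "prod": {"base_url": "https://www.example.test", "timeout_s": 10, "api_token": "prod-token"},
}

SECRET_NAMES = {"api_token"}


def resolve_variables(env):
    """Merge defaults with the overrides for one environment."""
    if env not in ENV_OVERRIDES:
        raise ValueError(f"unknown environment: {env}")
    return {**DEFAULTS, **ENV_OVERRIDES[env]}


def mask_secrets(variables, secret_names=SECRET_NAMES):
    """Return a copy that is safe to log: secret values are replaced with '***'."""
    return {k: ("***" if k in secret_names else v) for k, v in variables.items()}


staging = resolve_variables("staging")
print(mask_secrets(staging))
```

Keeping the merge and the masking as separate steps means a test run can use the real values while logs and reports only ever see the masked copy.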

Use Cases

Fallom

Monitoring Production AI Customer Support Agents

Ensure your AI-powered customer support chatbots and agents are performing reliably and cost-effectively in live environments. Fallom allows you to trace every customer interaction, monitor response accuracy and latency in real time, attribute costs per customer or support ticket, and quickly debug failed conversations or unexpected tool calls, leading to higher customer satisfaction and controlled operational costs.

Optimizing Cost and Performance for Internal Copilots

Deploy internal developer or business copilots with full financial and operational oversight. Use Fallom to track which teams and individuals are using the AI tools, analyze the cost-per-query for different types of tasks, and A/B test different models or prompts to find the optimal balance of intelligence and expense. This transforms an opaque utility into a managed, optimized service with clear ROI.

Ensuring Compliance for Financial or Legal AI Applications

Safely deploy AI in highly regulated sectors like finance, healthcare, or legal services. Fallom's comprehensive audit trails provide the necessary documentation for every AI-generated piece of advice, analysis, or content. Privacy controls allow sensitive data handling, and detailed logs support regulatory reviews, enabling innovation while maintaining rigorous compliance and risk management standards.

Debugging Complex Multi-Agent Workflow Systems

Troubleshoot and refine intricate systems where multiple AI agents collaborate or perform sequential tasks (e.g., a research agent that searches, analyzes, and then drafts a report). Fallom's session tracking groups all related traces, while timing waterfalls visually expose bottlenecks between agents or tools, allowing engineers to systematically improve the reliability and speed of these advanced AI architectures.

qtrl.ai

Scaling Beyond Manual Testing

For QA teams overwhelmed by repetitive manual test cycles, qtrl provides a transformative leap forward. It allows teams to maintain their structured, manual test management foundation while progressively introducing AI automation. They can start by having agents execute manual test instructions, then gradually shift to AI-generated test maintenance and creation, dramatically increasing coverage and speed without a disruptive, all-at-once overhaul.

Modernizing Legacy QA Workflows

Companies burdened with outdated, siloed, or script-heavy automation frameworks find a game-changing alternative in qtrl. The platform seamlessly integrates modern test management with intelligent agents, replacing brittle scripts with maintainable, English-language instructions. This breaks down legacy complexity, reduces maintenance costs, and injects adaptive intelligence into stagnant workflows, enabling a smooth transition to a contemporary QA practice.

Governing Enterprise AI Testing

Enterprises with strict compliance, audit, and security requirements can safely harness AI for QA with qtrl. Its governance-by-design philosophy ensures no black-box decisions, with full visibility into agent actions, permissioned autonomy levels, and enterprise-ready security. This allows large organizations to achieve the efficiency gains of AI-powered testing while maintaining the rigorous control, traceability, and audit trails they mandate.

Empowering Product-Led Engineering Teams

Product-led engineering teams that prioritize rapid iteration and user-centric quality can embed qtrl directly into their development lifecycle. The platform supports CI/CD integration and provides continuous quality feedback. Engineers and product managers can define high-level feature behaviors in plain English, and qtrl's agents can automatically generate and run the corresponding tests, ensuring new releases meet quality standards without slowing down the development pace.

Overview

About Fallom

Fallom is the game-changing AI-native observability platform that is transforming how organizations build, deploy, and manage production-grade Large Language Model (LLM) applications. Designed from the ground up for the unique challenges of AI agents and complex LLM workflows, Fallom delivers unparalleled, end-to-end visibility into every interaction. It empowers engineering and AI teams to move beyond guesswork, providing a crystal-clear lens into prompts, outputs, tool calls, token usage, latency, and the precise cost of every single LLM call. This transformative visibility is critical for teams that demand reliability, performance, and cost control from their AI systems. With its powerful, OpenTelemetry-native SDK, you can instrument your entire AI stack in under five minutes, unlocking live monitoring, instant debugging, and granular cost attribution across models, users, and teams. Fallom goes beyond basic metrics, offering enterprise-grade features like session-level context, timing waterfalls for multi-step agents, comprehensive audit trails for compliance, and robust testing frameworks. By providing a single pane of glass for your AI operations, Fallom unlocks the full potential of your LLM investments, enabling you to ship with confidence, optimize relentlessly, and scale intelligently.

About qtrl.ai

qtrl.ai is a transformative AI-powered QA platform that shatters the traditional compromise between speed and control in software testing. It is a game-changing solution designed for engineering and QA teams who are serious about scaling their quality assurance efforts without descending into the chaos of brittle automation or the unpredictability of black-box AI. qtrl provides a unified command center, merging enterprise-grade test management with intelligent, trustworthy automation. This powerful combination allows teams to meticulously organize test cases, plan and execute runs, trace requirements to coverage, and monitor quality through real-time dashboards—all from a single, governed hub.

The platform's true genius lies in its progressive approach to AI. Instead of forcing a risky, all-or-nothing adoption, qtrl introduces intelligent automation gradually and transparently. Teams begin with a solid foundation of manual test management and can confidently evolve to leverage autonomous AI agents. These agents can generate robust UI tests from simple English instructions, maintain them through application changes, and execute them at scale across any browser or environment. Built for product-led engineering teams, modernizing QA groups, and compliance-focused enterprises, qtrl.ai delivers a trusted path to faster, more intelligent quality assurance, finally bridging the gap between slow manual processes and complex, fragile automation scripts.

Frequently Asked Questions

Fallom FAQ

How quickly can I integrate Fallom into my existing application?

Integration is remarkably fast. Fallom uses a single, OpenTelemetry-native SDK designed for minimal friction. For most applications, you can be up and running with full tracing in under five minutes. Simply install the SDK, add a few lines of configuration code to instrument your LLM calls, and your data will begin flowing to the Fallom live dashboard immediately.

Does Fallom support all major LLM providers?

Yes, Fallom is built with an open, provider-agnostic philosophy. It works seamlessly with every major LLM provider, including OpenAI (GPT-4, GPT-4o), Anthropic (Claude), Google (Gemini), and open-source models. The single SDK abstracts away provider differences, giving you a unified observability layer across your entire AI stack with zero vendor lock-in.

How does Fallom handle sensitive or private user data?

Fallom is built with enterprise-grade security and privacy controls. It offers a configurable "Privacy Mode" that allows you to disable full content capture for sensitive interactions. You can choose to log only metadata (like token counts and latency) or apply redaction rules, ensuring you maintain full observability for debugging while protecting user confidentiality and meeting data privacy regulations.
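
The difference between logging full content, redacted content, and metadata only can be sketched in a few lines. The mode names, record fields, and the email-only redaction rule below are assumptions made for illustration; they are not Fallom's actual Privacy Mode settings, and real redaction needs to cover far more than email addresses.

```python
import re

# Simplified email pattern for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

CONTENT_FIELDS = ("prompt", "output")


def apply_privacy(record, mode):
    """Return a copy of a trace record filtered by a hypothetical privacy mode.

    'full'          keeps everything
    'redact'        keeps content but masks email addresses
    'metadata_only' drops prompt and output, keeping counts and latency
    """
    out = dict(record)
    if mode == "full":
        return out
    if mode == "redact":
        for field in CONTENT_FIELDS:
            if field in out:
                out[field] = EMAIL.sub("[REDACTED_EMAIL]", out[field])
        return out
    if mode == "metadata_only":
        for field in CONTENT_FIELDS:
            out.pop(field, None)
        return out
    raise ValueError(f"unknown privacy mode: {mode}")


record = {
    "prompt": "Email jane@example.com about the refund",
    "output": "Done",
    "tokens_in": 6,
    "tokens_out": 1,
    "latency_ms": 420,
}

redacted = apply_privacy(record, "redact")
metadata = apply_privacy(record, "metadata_only")
print(redacted["prompt"])
print(sorted(metadata))
```

In both restricted modes the token counts and latency survive, which is what allows cost and performance monitoring to continue when content capture is limited.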

Can I use Fallom for testing and evaluating my LLM prompts before deployment?

Absolutely. Fallom includes robust evaluation and testing features. You can run automated evaluations on LLM outputs against metrics like accuracy, relevance, and hallucination rates. The integrated Prompt Store allows for version control and A/B testing of different prompt variations, enabling you to catch regressions and scientifically deploy the highest-performing prompts to production with confidence.

qtrl.ai FAQ

How does qtrl.ai ensure control over the AI agents?

qtrl is built on a principle of "permissioned autonomy." You define the exact rules and boundaries within which the AI agents operate. Every action, from test generation to execution, is fully visible and reviewable in the platform. There are no hidden or black-box decisions; you maintain ultimate approval authority over what tests are created, what changes are made, and what gets executed, ensuring the AI earns trust through transparency.

Can qtrl.ai integrate with our existing development tools?

Yes, qtrl is designed for real-world workflows and offers built-in integration capabilities. It can connect with popular requirements management systems, version control, and CI/CD pipelines (like Jenkins, GitLab, GitHub Actions). This creates continuous quality feedback loops, allowing test execution to be triggered automatically by code commits or builds, and results to be fed back into your development process seamlessly.

What makes qtrl different from other "AI testing" tools?

Unlike tools that force a fully autonomous, AI-first approach, which can feel risky and unpredictable, qtrl offers a progressive, trusted path. It combines robust test management with AI, allowing you to start simple and increase automation at your own pace. The focus is on augmenting human intelligence with AI assistance, not replacing it, and on providing enterprise-grade governance that other tools lack.

Is my application data secure with qtrl's AI?

Absolutely. Security and data privacy are paramount. qtrl employs enterprise-ready security protocols. Crucially, for AI operations, sensitive data such as per-environment variables and encrypted secrets is never exposed to the AI agents. The system is designed to execute tests in defined environments without compromising sensitive information, ensuring your application data remains protected.

Alternatives

Fallom Alternatives

Fallom is a game-changing AI-native observability platform, a specialized tool in the development category designed to bring unprecedented visibility to LLM and agent operations in production. It transforms how teams track, debug, and optimize their AI systems with real-time insights into every interaction, cost, and performance metric. Organizations may explore alternatives for various reasons, such as aligning with specific budget constraints, integrating with an existing tech stack, or requiring different feature sets like custom reporting or on-premise deployment. The search for the right tool is a strategic step toward unlocking an AI system's full potential. When evaluating an alternative, focus on capabilities that match your transformative goals. Key considerations should include the depth of real-time tracing, granularity of cost attribution, strength of compliance and audit features, and the ease of integration. The ideal platform will not only monitor your AI but empower you to refine and scale it with confidence.

qtrl.ai Alternatives

qtrl.ai is a transformative platform in the automation and dev tools space, designed to empower QA teams. It uniquely combines enterprise-grade test management with a progressive, trustworthy layer of AI agents to scale testing intelligently. This approach bridges the critical gap between manual processes and brittle traditional automation, offering a governed path to faster release cycles. Users often explore alternatives for various reasons, such as specific budget constraints, the need for different feature integrations, or platform compatibility requirements. The search for the right tool is a strategic decision to find a solution that aligns perfectly with a team's unique workflow and maturity level. When evaluating options, key considerations include the balance of AI-powered automation with human oversight, the strength of core test management capabilities, and the platform's ability to provide clear audit trails and governance. The ideal choice unlocks potential without introducing unnecessary risk or complexity.